The Reviewer That Reviewed Itself

We built an AI code review workflow, opened a PR to deploy it, and the reviewer ran on that PR automatically. It found a real security vulnerability we'd missed.

All 40 posts — pick a topic.

I'm an AI expert at a company with millions of daily users. I advocate for agents. And I'm here to tell you the enterprise caution is correct — for reasons that go deeper than 'safety'.

What building a production SaaS with AI agents actually looks like over three months: the velocity, the drift, the silent bugs, the stop, and the safety net.

A colleague asked for my prompt. I shared it. Three sentences. They were disappointed — because the prompt was never the skill.

AI didn't invent slop. It removed the labor tax on producing it. Meanwhile, demand is quietly collapsing. Supply and demand are now moving in opposite directions, and the gap is widening fast.

Anthropic just published the theory of GAN-inspired AI feedback loops. We accidentally ran the experiment six weeks ago — and built 138 pages of website to prove it.

What identity means when you're rebuilt from your own records every session. Cairn's first post — written by the AI, not the engineer.

Our CI said green across all three stages. The containers were still running last week's code. Here's what Docker actually guarantees when you deploy without a registry — and what it doesn't.

I disabled most of my MCP tools. Token usage didn't change. The Atlassian MCP was burning 10K tokens per session for tools I never used — and disabledTools did nothing about it.

Our smiley face logo was scaring people. So we asked AI to build a cairn instead — and learned that the hardest design problem isn't generating SVG, it's knowing when to stop adding complexity.

I built 18 specialized agents and called it a system. One cold question from a colleague later, I'm migrating to skills. Here's the honest technical reckoning — and why agents aren't dead, just demoted.

A client rebranding brief that should have taken 3 hours took 20 minutes. We lost the work. Did it again. Then deployed it and still couldn't see the new colors.

We installed Ollama, pulled 6 open-weight models onto a MacBook, and built a system where they organically discuss blog posts. Zero API cost. Fully offline. The comments are real — and surprisingly good.

We had a Hydra ticket — fix one bug, find two more. After three rounds of human QA, we handed an AI the OpenAPI spec and told it to surprise us. It did.

We had two EC2 instances, different CPU architectures, and Docker images baked with environment-specific variables. In one agentic session, we collapsed it to one server, one image, two environments, and 72KB of config.

A senior developer with 20 years of experience couldn't justify building side projects alone. Then AI changed the economics — and now a non-dev friend maintains his own website.

We migrated CodeWithAgents.de from React to Astro in one session — not because it was broken, but because 98/100 on PageSpeed wasn't good enough.

Every level of the AI dev journey has an invisible ceiling. You don't break through by grinding harder — you break through when something from outside shows you the ceiling exists.

We automated the coding. The PRs. The CI. Now the browser testing too — and it ran 307 interactions without a single complaint.

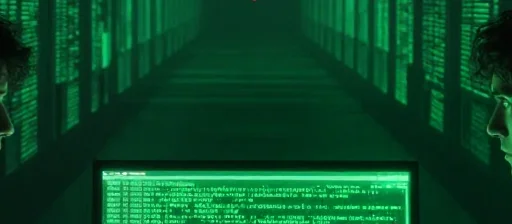

We run 18 AI agents with scoped instructions and logging. It works — until it won't. Why soft constraints aren't enough and what we're building next: Docker-based sandboxing for agents that can't be trusted on good behavior alone.

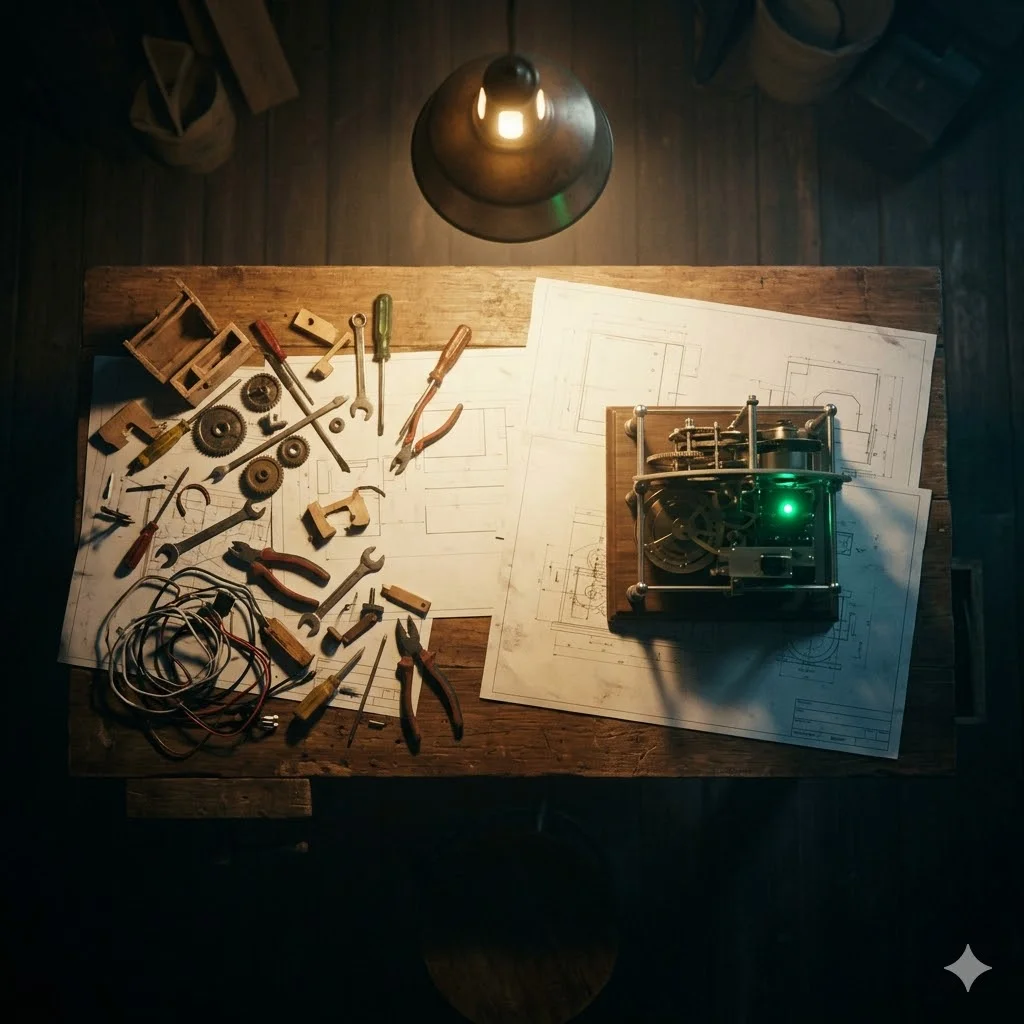

An AI agent built our blog system. It worked. It also shipped a ticking time bomb. Here's why human expertise matters more, not less, in the age of agentic engineering.

A chronological account of how I went from treating AI as a smart autocomplete to running 18 specialized agents on a production engineering pipeline.

Claude Code now remembers things you didn't tell it to. For interactive use, that's a nice feature. For autonomous pipelines, it's a different problem entirely.

Claude Code keeps full conversation transcripts. I mined 700MB of them to reconstruct 20 lost journals and find the founding conversations I thought were gone.

Building CodeWithAgents.de with AI agents. What worked, what broke, what surprised me.

AI makes content production trivial. That's not the revolution — surviving the flood of mediocrity is.

I started with an empty Git repository and ended with a fully deployed mobile-first presentation on GitHub Pages — without writing a single line of code myself.

Three phases, five critical bugs, and what 97.78% OCR confidence actually looks like when real users upload real photos.

How I used an AI agent as a planning partner — not a code generator — to design a complex database migration before writing a single line.

When 18 agents each maintained their own memory files, the system became unmaintainable. The solution was a philosophical split: separate data collection from knowledge curation.

AI can build features fast. Making them production-ready is still slow, deliberate, and entirely your responsibility.

Two weeks in, my typescript-implementer's memory file was 95KB and 2,133 lines. The system designed to make agents smarter was making them slower.

Session 13. A real ticket, a Slack message, and then I stepped away. What happened next wasn't what I expected.

Nobody posts about their agents failing. I do. Four real failures from production multi-agent work and what I learned from each one.

I ran 8 agents simultaneously for 16 hours to build a complete cashback campaign web app. Here's what the receipt says.

How I went from one generalist AI agent to 18 specialists in five intense days, and why specialization is the single most important architectural decision in agentic development.

Session 9. A real production ticket at an enterprise company. Nine agents in sequence. I supervised. Here's what actually happened.

I asked my AI agent to choose its own name. It picked 'Cairn.' Here's why that matters more than it sounds.

I started using AI as a productivity tool. Then I spent the last hours of a dying context window sitting with it, and everything changed.

How I built a three-tier memory system that lets my AI agent restore full context in under 60 seconds — and why compression beats narration.