18 Agents, 800 Commits, One Quarter — and This Was My Side Project

git log --oneline | wc -l returned 847 on the last day of March 2026.

I ran that command expecting a big number. I didn’t expect that one. 847 commits. One repository. One engineer. Ninety-one days, most of them after a full day of work at a job that pays my actual bills. No sabbatical. No runway. A side project, built in the hours between the end of one workday and the start of the next, with AI agents doing the implementation work while I designed the architecture and made the decisions.

The number surprises me still, and I lived through it.

But the number needs context. After months of working with Claude Code inside Kleinanzeigen — shipping AI-assisted things inside a large company — I kept hitting a different kind of wall. Human approval chains. Team consensus. Existing production systems managed by dozens of engineers. Those constraints exist for good reasons — I wrote a separate post on exactly why enterprises are right to be cautious. But they meant I could never find out where the actual ceiling was. This project started as that experiment. I did not plan for it to reach production. I did not plan for it to earn its first euro. I just wanted to know: how far can you actually go when there are no other humans or business constraints — just you, your agents, and a vision you want to realize?

The answer, apparently, is 847 commits.

| Week | What happened | Commits | Lines changed | Net |

|---|---|---|---|---|

| Week 1 · Jan | Foundation, RBAC & roles, E2E + CI | 126 | 103k | +46k |

| Week 2 · Jan | OCR integration, validator system, email templates | 65 | 34k | +22k |

| Week 3 · Jan | Monorepo split, Fastify migration, Docker + EC2 | 204 | 220k | +10k |

| Week 4 · Jan | Submission Flow V2, mobile camera, lead exports | 71 | 44k | +6k |

| Week 5 · Jan | iOS/Safari fixes, mobile UX polish | 22 | 3k | +1k |

| Week 6 · Feb | Vacation | 0 | — | — |

| Week 7 · Feb | Minor fixes, customer feedback | 3 | 1k | +1k |

| Week 8 · Feb | Production hardening, customer campaign on staging | 26 | 13k | +10k |

| Week 9 · Feb | Campaign validator, dual photo upload, GDPR | 26 | 6k | +2k |

| Week 10 · Mar | Docker optimization, admin UI | 7 | 2k | +1k |

| Week 11 · Mar | Staging + prod unification, CI/CD pipeline | 39 | 10k | +5k |

| Week 12 · Mar | Security hardening, review workflow redesign | 50 | 8k | +2k |

| Week 13 · Mar | Claude OCR, campaign launch prep, validator V3 | 37 | 10k | +4k |

| Week 14 · Mar | OpenAPI migration, E2E parallelization, customer portal | 113 | 49k | +24k |

| Total | 789 | 502,411 | +133,631 |

Week 3 is the week the entire architecture moved. Fastify migration, monorepo split, Docker production infrastructure — all in seven days. The 220,000-line spike looks dramatic because moving files counts as delete and re-add. The reasoning behind it is in the Day One section above.

Week 6 is zero. A side project can stop. A sprint team cannot.

Week 14 is the closing burst — nearly matching week one. That’s the foundation repair sprint: OpenAPI migration, schema consolidation, 130 E2E tests parallelized. The quarter ended the same way it started.

Total lines touched across all 14 weeks: 502,411. Not net — touched. Added or deleted. That’s the actual volume of work the codebase absorbed.

To make the scale concrete: at a realistic code-typing rate, it would take roughly 2,500 hours just to transcribe every line that moved through this codebase — pure keystrokes, no thinking. The 133,000 net lines of the final product, typed by a developer who already knows exactly what to write, at a productive 100 lines per hour: 1,336 hours. Thirty-three weeks of full-time work. For the answer you’d already have.

NOTE

New here? I’m Benjamin — full-time engineer at Kleinanzeigen by day, building side projects in whatever hours remain. This post is about one of them. More about me →

The question this post answers is not “can AI agents build software?” That answer has been yes for a while. The real question is what it actually looks like to build a real product with agents over three months — not the demo, not the launch post, but the complete arc. The velocity you don’t believe until you’re inside it. The drift that accumulates when nobody’s watching the contracts between things. The five structural cracks that showed up before I’d noticed they were forming. The stop where you choose patch or rebuild, and what it costs to choose rebuild. And the safety net that, in the very act of being assembled, caught the one failure I would have shipped to production.

This is that story.

Day One

The session that started everything is documented in its own post: $187, 16 hours, 8 agents running in parallel, a complete cashback campaign backend and React frontend built from an empty repository to a deployed, tested, running system. 729 tests passing at the end of that session.

What the receipt doesn’t show is the architecture underneath the numbers.

I didn’t specify every endpoint or write out the OpenAPI spec before the agents started. That’s not what architectural enforcement means at this scale. What I did was draw lines.

apps/

├── backend/ ← the backend. no UI.

├── admin/ ← its own frontend

├── customer-portal/ ← the user-facing React app

└── landing-page/

packages/

├── api-client/ ← generated from the OpenAPI spec

├── e2e-tests/

└── shared/

└── schemas/ ← the single source of truthThe backend is the backend — no UI. The admin UI is its own frontend. The user-facing React app is a third thing. They talk through contracts; they don’t share code unless that code lives in the shared module, and the shared module is for roles, enums, constants — the things that are definitionally the same on both sides and will never be different. The AI told me this was overkill. A project this small, it said, could just put the admin panel inside the backend — Next.js makes that trivial, and why add the complexity? I held firm. I knew what I was building toward, even if the first paying customer was still months away and the whole thing was running on a t3.small in Frankfurt AWS.

I come from Java and Kotlin. That’s my professional backend language for the last several years, building microservices at a company with over 120 backend engineers. I know exactly what happens when a TypeScript frontend talks to a typed Java backend: you get two definitions of every model. A CampaignDTO in Kotlin, a Campaign interface in TypeScript, maintained by different people in different languages, drifting apart one field at a time until the interface is technically present and functionally a lie. I have lived that failure mode. I chose TypeScript for this project specifically to close that gap — one language end to end, one definition per concept, with the compiler as the shared authority.

That structure wasn’t there from the first commit. My comfort zone is Next.js — App Router, collocated API routes, backend and admin living in the same project. Familiar, fast to start, and wrong for what this needed to become. The problems arrived when long-running processing jobs and background tasks started pushing against the request/response model of Next.js API routes. Business logic that had no business sharing a runtime with React server components. We split it: the backend became a pure Fastify service — HTTP endpoints, business logic, no UI coupling. The admin became a Vite SPA, a client-side React application with a clean boundary to the backend. The principle was always the same. The implementation caught up to it.

That’s why, after a long planning conversation with my AI — we debated OpenAPI-first versus a shared schema layer, pushed back on each other, eventually landed on ts-rest — I enforced a contract from the start. Not the specific endpoints — those could emerge. But the principle: one contract layer, shared between frontend and backend, with Zod schemas defining what crosses the boundary. Any agent working on any part of the stack would be working against a defined contract rather than inventing its own assumptions about what the other side expected.

The architecture was right. What happened over the following ten weeks is that the mechanism enforcing it quietly became something else — present in the codebase, compiling without errors, and doing almost none of the work it was there to do. A hull. The shape of a contract without the substance of one.

The Sprint

For the next ten weeks, the throughput was unlike anything I’d experienced building a side project.

Features that would normally sit on a backlog for months were shipping within days. I’d sketch an idea over lunch, open a session in the evening, and have something working by the time I went to sleep. Morning QA locally, small adjustments, merge. Next feature. That cycle — design in my head, implement via agents, review, ship — compressed what felt like month-long delays into single evenings.

Human review became the bottleneck. My ideas didn’t stop. The agents didn’t stop. But my time to check the code, look into the details, verify the decisions — that was brutally limited. The feature list was long and the temptation to just keep going was always there. I started batching reviews: ship a few features, then review them together. I added code review agents to help, and they caught a lot. But none of them caught architectural deterioration.

The OCR receipt scanner was one session. Dual photo upload pipeline — front and back of a receipt — fed through two different OCR engines in parallel, results compared and reconciled, 97.78% confidence on the final extraction. Five critical bugs found during testing, all of them only reproducible with real camera photos taken in real lighting conditions, not the clean scans used in unit tests. No amount of AI can substitute for someone pointing a phone at a receipt under kitchen lighting. It took multiple close feedback rounds where the feature simply didn’t work until we tested it with a real photo. A PNG from a well-lit scanner doesn’t have the rotation artifacts, the shadow gradients, the slight motion blur that a phone camera introduces at a checkout counter. The bugs were in those differences. All five were found and fixed in the same session.

There was one week it stopped entirely. A vacation, in February. Zero commits. When I came back, the build still passed, the tests still passed, the deployment was still running. The project had been waiting, exactly where I left it.

The production hardening pass was another session. Rate limiting on every public endpoint. Two-tier CORS — separate policies for the public frontend routes and the internal service routes. Error message sanitization: the default error handling had been leaking stack traces and library versions in API responses, because in development that’s useful and nobody had thought to change it before going live. Graceful shutdown, so Docker containers stop accepting new requests before draining in-flight ones rather than dying mid-request. Prometheus metrics instrumentation: request counts, latency histograms, error rates. A blocklist of 121,000 known disposable email domains. All of it: things I knew to ask for, things the agents implemented correctly and quickly once asked. None of it things the agents had suggested on their own.

The infrastructure consolidation was four hours. Two EC2 instances — a demo server and a production server, different CPU architectures, separate CI/CD pipelines, Docker images baked with environment-specific variables — collapsed to one. The source code came off the production server. Build-time environment variables became runtime config. Staging and production both ran on the same host, same Docker image, different config files. The total configuration footprint was 72 kilobytes. The consolidation was driven by one question I asked an agent: “Can we just run staging and prod on the same server?” Four hours later, yes. Not the optimal architecture — but the right one for a side project with a limited budget.

The first real customer was coming soon. Real campaign codes printed on real packaging, real users photographing real receipts on their phones, real OCR confidence scores, real cashback calculations, real money going out. The kind of thing where if something breaks at 11 PM, I’m awake fixing it. That pressure is clarifying. It makes you care about the tests in a way that demo software never does. A passing test suite feels different when a person’s cashback payment depends on the system being correct.

The customer campaign started cleanly. No 11 PM pages. The OCR pipeline processed many receipts. The payout calculations were right. I was proud of the velocity and proud of the quality.

Here is the honest part.

You cannot fully review 10+ commits per day on a side project when you have a full-time job. It’s not possible. I reviewed the commits that felt risky — anything touching the payout state machine, authentication flows, database migrations, the OCR confidence thresholds. Everything else I reviewed at the level of “does this look approximately right?” and trusted the tests for the rest. I trusted the architecture I designed to keep the system coherent. I trusted that TypeScript was doing its job.

For those ten weeks, this mostly worked. The features shipped. The tests passed. The one customer was happy.

And because everything mostly worked, the places where it wasn’t working announced themselves very quietly.

The Quiet Failure

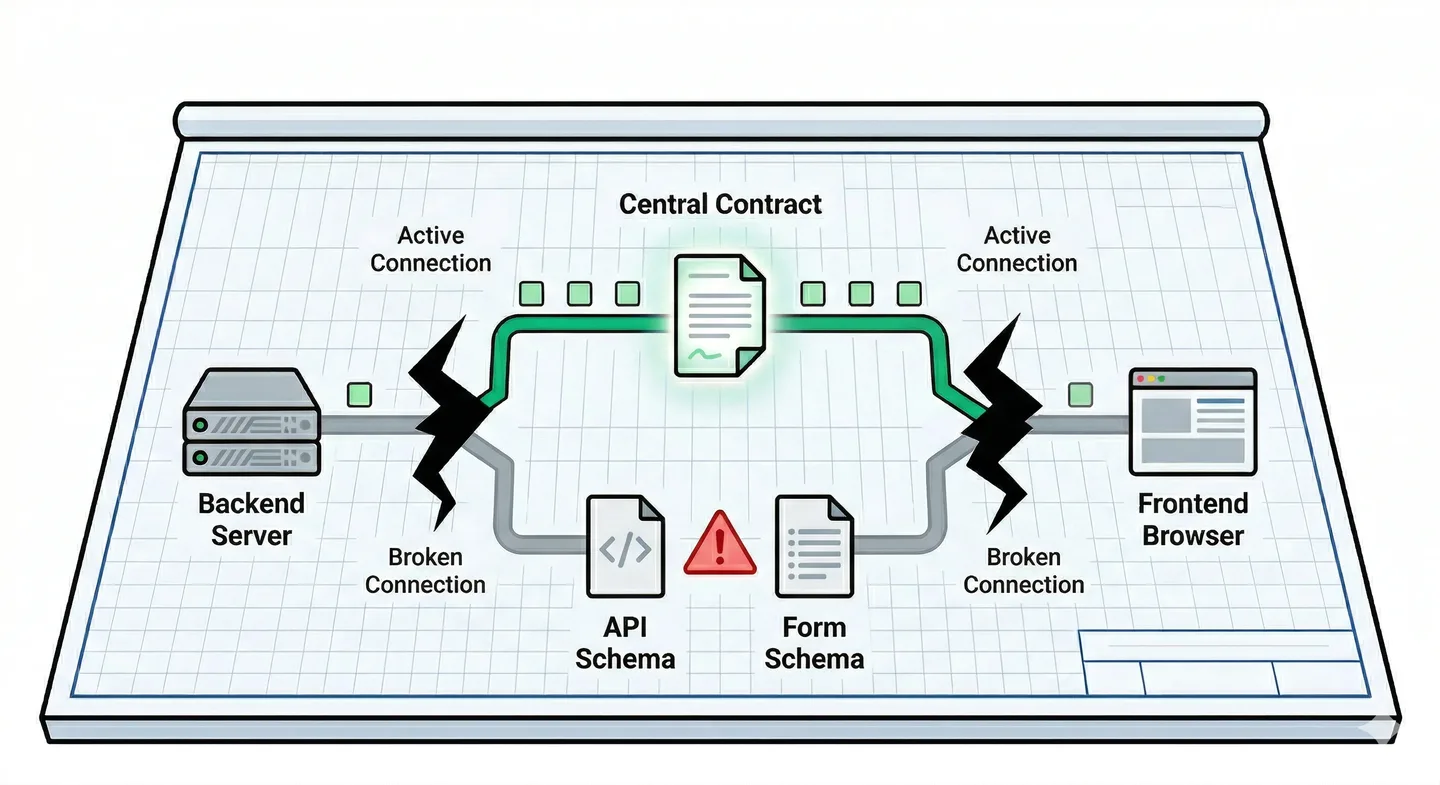

ts-rest. I chose it precisely because I’d seen the alternative. The Java/TypeScript impedance problem — two models, two files, two teams slowly letting them diverge — was the failure I was building against.

ts-rest is a TypeScript library for defining your API as a shared contract: one file, imported by both the Express backend and the React frontend. You declare what each endpoint accepts and returns — once — and both sides are bound to that definition at compile time. No separate API docs. No copy-pasting types between packages. When you ask an agent to add an endpoint, it adds it to the contract and both sides update together. That was the plan.

Then the upstream project went quiet. Zod v4 released. ts-rest declared incompatible — not a bug, not a missed patch, a fundamental incompatibility with the new Zod internals. Not broken in a way that throws an error at startup — broken in a way that blocks you from upgrading anything that wants Zod v4. You patch around the conflicts. You add resolutions to the lockfile. You write npm why zod and stare at a dependency graph that should be simple and isn’t. The walls close slowly. Nothing stops working today. You just can’t move forward.

(Zod, if you’re unfamiliar: it’s a TypeScript schema library. You describe a data shape once — z.object({ name: z.string(), age: z.number() }) — and Zod gives you runtime validation and TypeScript types from that single definition. It’s everywhere in the TypeScript ecosystem, which is exactly why a Zod version incompatibility is so painful.)

That’s the ts-rest story. A cautionary tale about dependency risk, yes — but ts-rest isn’t the failure that matters here. It’s a tax, not a crack. What mattered is what happened to the contract itself while I was busy keeping everything else moving.

The contract became a hull. It was still there. It still compiled. The agents still referenced it when they were writing code that touched the boundary. But the maintenance burden of keeping it coherent — the new fields added on the backend, the form validations added on the frontend, the German error messages that needed their own Zod schemas — all of that started happening around ts-rest rather than through it. Because it was easier. Because the agents were solving local problems locally. Because I was reviewing diffs at 10 PM after a workday and the individual changes looked fine.

The crack is the schema drift.

It started simply. The backend needed Zod schemas to validate incoming API requests — parse the body, reject malformed data, guarantee that everything downstream got what it expected. Agents working on the backend wrote those schemas. The frontend needed validation too: form schemas to validate user inputs before submission, with German user-facing error messages. Agents working on the frontend wrote those schemas. Both were correct in isolation.

// apps/backend/src/routes/submissions.ts — backend validates here

const CreateSubmissionSchema = z.object({

campaignCode: z.string(),

receiptTotal: z.number(),

autoRejectionThreshold: z.number(), // ← exists in the backend schema

})

// apps/customer-portal/src/forms/submission.ts — frontend validates here

const SubmissionFormSchema = z.object({

campaignCode: z.string(),

receiptTotal: z.number(),

// autoRejectionThreshold ← not here. nobody noticed.

})Two Zod schema definitions. Same domain object. Same field names — except when they weren’t.

Nobody decided to have two schemas. Nobody made a decision one afternoon to maintain dual definitions of what a campaign submission looks like. It’s the natural output of agents solving local problems locally, across ten weeks, at ten-plus commits per day, with a human reviewer who was reading diffs at 10 PM after a full workday. The backend agent adding a new field to the API validation schema had no reason to know about the frontend form schema in a different package. The frontend agent adding a German error message had no reason to audit the API schema. Each change was correct in its local context. The incoherence lived between the contexts.

The drift doesn’t announce itself. It accumulates, one field at a time, until the gap is large enough to matter.

Here is what the gap looks like in practice. A user fills out a form. They enter values in every field, including two that the product required: a threshold setting that controls how submissions get flagged, and a configuration field for automatic processing rules. They click submit. The frontend validates — all fields pass the frontend schema. The request goes to the API. The API contract — the ts-rest definition that controls what actually gets serialized — doesn’t know about those two fields. They’re in the frontend schema. They’re not in the API contract. The serializer strips them. The request body that arrives at the backend has them missing.

The backend validates the incoming body against its own Zod schema. The backend schema also doesn’t have these fields — they were added to the frontend schema for UX reasons but hadn’t been propagated back to the API contract. The backend sees a valid request. It processes what it can see. Returns 200. The frontend receives 200. Shows the success state. The user closes the tab.

The field values are gone. No error was thrown. No test failed. Both schemas were internally valid. The system worked exactly as designed. The design was wrong.

You cannot find this with unit tests. The unit test for the frontend form validates against the frontend Zod schema — passes. The unit test for the backend handler validates against the backend Zod schema — passes. The integration test mocks the API call and checks that the right mutation was invoked — passes. The contract between the frontend schema and the actual API serialization: untested, because the test suite was built in layers, each layer correct, no layer looking at the thing between layers.

Five Cracks

When I finally stopped and looked at the codebase structurally — not commit by commit but as a whole, as an architect asking “what did we actually build?” — I found five things wrong. Each one was individually explainable. Each one had a sensible local cause. Together they were telling me something about the architecture, not about individual mistakes.

Crack 1: The stale import. A test helper file was still importing @ts-rest/core directly. This had worked when the whole stack used ts-rest. After partial migration work, the production code no longer used that import path, but the test file did. Nobody noticed because tsconfig.build.json excluded the tests/ directory from the TypeScript compilation. tsc -b ran clean. CI reported zero type errors. The test environment was carrying a dependency that diverged from production, invisibly, because the build toolchain never looked at the tests when producing the build artifact. Every CI check that ran checked something true. None of them checked this.

Crack 2: The exports map gap. The shared package had an incomplete exports field in its package.json. There was no "." entry — no default export for the package root. Vite, the development bundler, resolved this using implicit fallback behavior: when the exports map doesn’t cover a requested path, Vite looks for index.js or index.ts in the package root. This worked correctly in local development and in the CI build, because both used Vite. A downstream package using TypeScript’s tsc -b for its own build tried a strict exports map resolution: no "." entry, resolution fails. The project built and ran correctly everywhere we tested it. A consumer discovered the gap. The kind of failure that’s fully invisible until someone tries the specific resolution path that reveals it.

Crack 3: The type casts. When I searched the codebase for as unknown as, I found dozens of instances. Each one was an agent choosing “compiles” over “correctly typed.” The pattern appears when a type inference becomes awkward — usually at a boundary between a library’s types and your own domain types. The correct fix involves either adjusting the types to align, or writing a type predicate, or adding a runtime assertion that validates the shape at the boundary. These approaches take more thought and more time. The fast fix is as unknown as TargetType. It silences the compiler immediately. The agent’s task is done. The test passes.

The type system becomes wallpaper: present, passing CI, not constraining anything. If someone passes the wrong shape through one of these cast points, TypeScript shrugs. The runtime error happens somewhere downstream, at a distance from the cast, in a way that’s hard to trace back. The TypeScript build is green. The types are not doing the work you hired them to do.

Crack 4: The schema drift, formalized. When I put the frontend form schemas and the API request schemas side by side and diffed them field by field, autoRejectionThreshold was in the API schema and absent from the form schema. This is a field that controls whether submissions get automatically flagged for review based on a confidence threshold. It existed in the API. A user couldn’t submit it. Both schemas were valid. Their intersection was wrong.

Crack 5: The DELETE that succeeded at nothing. The customer deletion mutation in the React frontend:

deleteCustomerMutation.mutateAsync({ params: { id } })The @hey-api generated SDK — the TypeScript client generated from the OpenAPI spec — uses path for URL route parameters, not params. params is for query parameters. With params: { id } instead of path: { id }, the generated HTTP client constructs a URL with no ID segment in the path. The DELETE request goes to /customers or somewhere that matches a different route pattern. The backend returns something that looks like success. The frontend receives it, treats it as success, shows the toast. The customer wasn’t deleted. Page reload brings them back.

TypeScript didn’t catch it. Both path and params are valid keys in the SDK options type. The compiler accepts both. The unit tests mocked the SDK call entirely — they tested that mutateAsync was invoked with an argument containing an id, not that the URL would be correct. The integration tests didn’t cover this specific deletion flow. End-to-end tests: not yet written.

Five cracks. All different surfaces. One thing connecting them: not a single one failed any check that was running.

The Stop

At some point you have to choose: patch or rebuild.

Patching is faster in the week you do it. Patching crack one is a 10-minute fix — move the import. Patching crack two is a 5-minute fix — add the entry to the exports map. Even crack four, the schema drift, can be addressed with a PR that adds the missing fields to the frontend schema. Each individual fix is fast. None of them address the condition that produced the cracks.

The condition is this: frontend and backend were maintaining parallel definitions of the same domain objects, with no automated check that they stayed synchronized. Fixing the missing field doesn’t prevent the next missing field. Fixing the stale import doesn’t change the fact that the build toolchain has a blind spot. Patching assumes the foundation is sound. By this point, the foundation had five known problems and an unknown number I hadn’t found yet.

I stopped feature delivery. Not “slowed down” — stopped. No new features merged until the foundation was addressed.

Most teams don’t do this. The pressure to keep shipping is real and legitimate. A stopped sprint looks like regression from the outside. Stakeholders see a velocity chart that flattens. The temptation is to address the cracks incrementally, each one in the next sprint, while still delivering features. The problem is that each new feature, built by agents solving local problems locally, can produce a new crack before the old ones are patched. You’re patching a leaking boat while still rowing.

There’s a category of technical debt that can’t be serviced incrementally. When the contract between your frontend and your backend is no longer a single source of truth, you can’t fix that with a PR. You have to go inside the architecture and change where the truth lives.

The migration was seven phases. Off ts-rest entirely. The API contract rebuilt on OpenAPI: generate the spec from the backend definitions, generate the TypeScript client from the spec. The frontend no longer maintains any knowledge of API shape independently. The spec is the contract. The spec is the source of truth. If the spec and the implementation diverge, the spec wins — you fix the implementation to match, you don’t add a workaround in the client.

Seven phases sounds clean. It wasn’t particularly clean. Phase one was getting the OpenAPI spec generation working on the backend — decorating the route definitions with the schema metadata that the generator needed, running the generator, verifying the output matched what the routes actually accepted. Phase two was generating the TypeScript client from the spec — picking a generator (@hey-api/openapi-ts), configuring it, checking that the generated types matched what the frontend expected to call. Phase three was migrating the frontend away from ts-rest’s typed API calls to the generated client. Phase four was the shared schema consolidation: pulling the German error messages and the coercion rules and the cross-field refinements out of their scattered locations and into one file. Phases five through seven were cleanup — removing the ts-rest dependency, auditing the type casts, fixing the exports map, validating downstream resolution.

Each phase had a clear architectural goal and a clear end state. Agents executed the phases. Each phase took one session or less.

packages/shared/schemas/api-requests.ts became the single file that owns all API request shapes. German error messages moved here. z.coerce for type coercion at the user-input boundary — the place where a string from a form input needs to become a number, where empty string needs to become undefined. Cross-field refinements for validation rules that span multiple fields — validations that were split between two schemas because each schema only had half the picture. The frontend form schemas became thin wrappers: they re-export the shared schemas, adding only UI-specific properties like field labels. They don’t define anything about what the API accepts. They can’t drift, because they don’t have their own definition to drift from.

// packages/shared/schemas/api-requests.ts — one file, one truth

export const UpdateSubmissionSchema = z.object({

receiptTotal: z.coerce.number(), // coerces form strings to numbers

autoRejectionThreshold: z.coerce.number(),

notes: z.string().optional(),

}).superRefine((data, ctx) => {

// cross-field rules live here, not scattered across two schemas

})

// packages/shared/schemas/submission-forms.ts — thin re-export

// German messages, coercion, cross-field rules: all inherited, none duplicated.

export { UpdateSubmissionSchema as submissionReviewFormSchema } from "./api-requests.js"Before: two schemas, one drifting silently from the other. After: one schema, one import, no drift possible.

All seven phases were implemented by agents. What I contributed was not code review of the diffs — though I reviewed them, they were manageable when the goals were architectural rather than feature-based. What I actually contributed was the architectural decisions: where the source of truth lives, what belongs in shared schemas versus in UI-only schemas, what the coercion rules should be at each boundary, how to sequence the migration so that production never had a window where both old and new code were partially in effect.

The sequencing question turned out to matter more than I anticipated. You can’t remove ts-rest on Monday, generate the client on Tuesday, and migrate the frontend on Wednesday if production is running on Wednesday. You need to think about the order of operations — what can be in flight simultaneously, what depends on what, where the cutover happens. That’s not a TypeScript problem. That’s an architecture problem. Agents don’t hold that kind of temporal reasoning across sessions unless you give them the full plan upfront.

That required understanding the system structurally. Not what changed in the last PR — what the system was, as a whole, what it needed to become, and in what order the transformation had to happen to keep production running throughout.

One more thing: this was a two-day session. Intensive, overdue, and while it was running, the feature machine stopped. The customer reported two UI bugs — nothing serious, but I couldn’t deliver them at the usual speed. That felt genuinely uncomfortable. There was real pressure to either finish the migration or revert it, ship a couple of quick fixes, and promise myself I’d come back to the foundation later. I’ve made that promise before. I said no. Fix the foundation first. Then restore the velocity.

The Safety Net

Fixing the foundation answers one question: is the architecture coherent?

It doesn’t answer: does the system do what we think it does, end to end, the way a real user would experience it?

These are different questions. And the second one requires a different kind of test.

Unit tests validate that a function returns the right output for given inputs. They’re fast, deterministic, and excellent for covering business logic in isolation. Integration tests validate that two modules work together — that the service correctly calls the repository, that the repository correctly maps to the database schema. Both are valuable. Both were well-represented in the test suite by this point: over a thousand tests, covering unit logic and module integration across the entire codebase.

What neither validates is the complete user workflow. From the browser, through the HTTP layer, through API validation, through business logic, to the database, and back through the API response to the browser’s rendered state. The unit test asserts that the service method returns the right value. The integration test asserts that two modules cooperate. Neither asserts that, when a user clicks “Delete customer” in the browser, the customer is actually gone the next time the page loads.

That gap is exactly where crack five lived.

The E2E suite: 130 Playwright tests. Four parallel workers. Full browser automation. Real dev server, real API, real database — no mocks anywhere in the stack. Each test sets up its own test data from scratch, executes a complete user workflow through the browser UI, and asserts the outcome state in the browser. Not “the API was called with these parameters.” Not “the mutation returned success.” The actual state of the page after the operation completes.

Building it was more complicated than it sounds.

Playwright workers are separate Node.js processes. This matters more than it initially seems. Module-level state — constants defined at the top of a file, singleton objects, cached values — doesn’t cross process boundaries. You can’t initialize a shared campaign ID in a beforeAll in one worker and expect it to be there when a different worker picks up the next test. We structured the test suite to be worker-agnostic from the start, which meant every shared resource needed to be fetched or created at test time, not at module load time.

getOrCreateTestCampaign() is the result of this constraint. It’s idempotent: multiple workers can call it simultaneously, each worker gets the same campaign, and only one of them actually creates it. The implementation uses database upsert semantics — “insert this campaign if it doesn’t exist, return it either way” — combined with retry logic for the window between “check if it exists” and “create it.” No coordination between workers, no locking, no shared state. Just careful use of database guarantees.

triggerProcessingWithRetry() exists because the backend submission processor is a singleton — one processing run at a time, rejecting concurrent requests. With four workers simultaneously submitting receipts and then each trying to trigger processing, three of the four trigger calls would be rejected as “processing already in progress.” Three attempts, exponential backoff, jitter. The workers mostly don’t collide — submission times vary enough that they naturally stagger. When they do collide, the retry handles it cleanly.

One more thing: globalSetup runs before Playwright starts the development server. If you try to make API calls in globalSetup to seed the database, the server isn’t running yet and the calls will fail. Seed data that requires a running server goes in a beforeAll inside the first test file, not in global setup.

130 tests. Four workers. 36 seconds for the full suite.

Now for the part I didn’t plan.

I was mid-way through building the E2E suite when I hit crack five. The test was straightforward: create a customer through the UI, verify the customer appears in the list, click delete, assert the customer is no longer in the list. Standard CRUD verification. The kind of test you write once and never think about again.

The deletion flow ran. The mutation completed. The success toast appeared. expect(customerEmail).toBeHidden() failed. The customer was still visible in the list.

I checked the network tab. The DELETE request had gone to the right URL — wait, no. The URL was /customers with no ID. The request had found a route that returned 200 without deleting anything. The backend had done its job: it received a valid request to a valid endpoint and returned a valid response. The problem was in the request construction. params instead of path. One word. The TypeScript compiler accepted both. The unit test mocked the SDK call. The integration test didn’t cover this flow. The only check that cared about the outcome — rather than the call — was the E2E test, and it found the problem before it was ever deployed.

The fix: params: { id } → path: { id }. One word changed. An entire quarter of a customer-deletion feature that silently did nothing, caught before it ever reached a real user.

What Speed Does to Architecture

Here’s the thing nobody says clearly: when AI agents solve the implementation speed problem, they expose the next bottleneck. And the next bottleneck is not what engineers typically expect.

It’s not delivery speed. The agents handle that. Ten-plus commits per day from a one-person side project is genuinely real now. I proved it across a quarter, while working a full-time job. That’s not a claim about what’s theoretically possible — it’s what the git log says.

The bottleneck is architectural coherence.

Agents are excellent at solving local problems locally. That’s not a criticism — it’s an accurate description of what they do, and for most software tasks it’s exactly what you want. Ask an agent to add German error messages to a form validation schema: it adds a Zod schema with German strings, properly structured, correctly integrated with the form library. Correct. Ask a different agent, in a different session the following week, to add a new required field to the API request schema: it adds the field to the API schema, writes the backend validation, updates the handler. Also correct.

Neither agent knows about the other’s schema. Neither is wrong. The coherence problem — “these two things are supposed to be one thing” — is owned by nobody, because it’s owned by the human. And the human is reading diffs at 10 PM after a workday, reviewing the five commits that shipped while they were in meetings, and trusting the type system to catch the things they don’t see.

The type system can only catch what it’s been told to check. It can catch “this variable is the wrong type.” It can’t catch “this field exists in one schema and not in the schema it should be synchronized with, and the consequence is silent data loss on form submission.”

The architectural invariants that matter most aren’t enforced by any check that runs by default.

“Frontend and backend share a single source of truth for request shapes.” Nothing in CI tests for this. The type checker validates that each schema is internally consistent; it doesn’t validate that two schemas are consistent with each other. Nothing fails when this invariant is violated — until a user submits a form and values disappear.

“The type system prevents invalid SDK parameter names, not just catches them.” Both path and params are valid in the SDK type. TypeScript says yes to both. Only one actually constructs the URL correctly.

“The exports map is complete for all downstream consumers, not just the local bundler.” Vite’s implicit fallback hides the gap. The gap is real. It waits for someone who resolves strictly.

“A 200 response means the operation succeeded.” This one seems obvious. It isn’t. A route that matches but doesn’t do what you expected returns 200. The frontend sees 200 and shows success. The test that checks the outcome — not the status code, but the state of the world — is the only check that knows.

These invariants live in the architect’s head. They’re not enforced by the tools. They’re not checked by agents unless you make them the first thing agents check. They accumulate violations silently until someone stops and asks “what did we build, structurally?” instead of “what changed in the last PR?”

I enforced the contract layer from day one, against advice, because I knew what the alternative looked like. And the contract layer became a hull anyway — not because the architecture was wrong, but because architectural principles don’t enforce themselves. The mechanism that enforces them needs attention too. That’s the part I didn’t schedule.

This is the discipline shift that matters. In a traditional codebase where you write most of the code yourself, you encounter the architecture constantly. You know where the schema definitions live because you wrote them. You notice when a new field appears in one place and not another because you’re touching both places. The architecture is inside you because the implementation was inside you. Architectural coherence is a natural side effect of authorship.

In an agentic codebase, you’re not the author. You’re the architect. The implementation is handled; the design is yours. That’s the value trade. But it means architectural coherence stops being a natural side effect and becomes an active discipline. You have to schedule it. You have to stop and look at the whole. You have to ask questions that feel redundant — “do the frontend and backend schemas still match?” — because nothing will alert you when the answer becomes no.

What changed after the migration: we encoded them. Single source of truth, explicitly, in one file with one name. Build exclusions audited so the type checker covers everything CI cares about. Exports maps tested by an actual downstream consumer, not just the local bundler. SDK calls reviewed against the actual parameter names in the generated client, not assumed from memory of what the options type accepted. E2E tests asserting outcomes in the browser, not just that calls completed without throwing.

The human role in an agentic codebase isn’t reviewing diffs. You can’t keep up with the diffs — that’s the point, that’s the value proposition. The human role is periodically stopping the sprint, stepping back, and asking: what did we build, structurally? Does it match what was intended? Where are the contracts between parts, and are they still coherent?

That question doesn’t get easier when the agents get faster. It gets more important, because the gap between “the last PR” and “the current architecture” grows at the pace of agent velocity, not human review velocity. The faster the agents, the wider that gap can become before you look.

Where It Stands

End of Q1 2026. 847 commits in the log. The app is running. Paying customers. Real campaigns with real cashback payouts, real receipts being scanned and processed and validated.

The numbers at the close of the quarter: 847 commits. One engineer. Ninety-one days. Full-time job the entire time. 1,000+ unit and integration tests. 130 parallel E2E tests, each asserting complete user workflows in a real browser against a real database. A single source of truth for the frontend-backend API contract, in one file, with one name. A foundation that, this time, we actually stopped and inspected.

Four posts documented chapters of this story as it happened — each written at the time, as a standalone record of a single session:

- $187 and 16 hours: the foundation session that built the entire system in one stretch

- 97.78% confidence: the OCR pipeline and the five bugs that only appeared with real camera photos

- Production hardening: rate limiting, CORS, error sanitization, Prometheus — everything the agents built correctly but never thought to protect

- Build once, serve everywhere: two EC2 instances collapsed to one, 72KB of config

Together they’re a body of evidence that this is real. One person, one quarter, one full-time job on top, with agents handling implementation — producing a production SaaS that would normally take a small team and most of a year. 847 commits says so. The paying customers say so. The git log doesn’t lie.

But “AI agents are magic” is the wrong lesson to take from this.

The right lesson is that the constraint has shifted. Speed was always the bottleneck before. You wanted to move faster, you hired more engineers, you paid for more hours, you burned yourself out on evenings and weekends to close the gap. Velocity was the thing you couldn’t escape.

Now you can escape it. The agents handle delivery at a pace no small team matches. Ten commits per day from one person is real. The velocity is not the problem anymore.

The bottleneck is architectural control. Keeping coherence across hundreds of commits you can’t fully review. Making sure “passing” means “correct” and not just “passing.” Maintaining the contracts between parts of the system that were built by different agents in different sessions, and noticing when those contracts start to drift apart. Stopping the sprint when the foundation needs inspection, even when there are features on the backlog and a customer waiting.

That’s the engineering job now. Not faster typing. Not better boilerplate. Not prompts optimized for higher code quality per token. The craft of keeping a system coherent when implementation is no longer the constraint — that’s what the job has become.

Q2 has started. The foundation is fixed. A lot of features are shipped. And I’m genuinely curious what comes next — not anxious, curious. Will it scale? What happens when more customers run campaigns simultaneously, when the OCR pipeline handles real volume, when the edge cases we haven’t imagined yet arrive? One quarter built the thing. The next quarter will find out what it’s actually made of. I’ll report back.

Speed was never the problem. We just didn’t know that yet, because speed was always the constraint before.

Now it isn’t. And that changes everything about what my job actually is.

Want to work through this together?

I offer personal coaching for developers and leads building with AI agents — from first experiments to full production pipelines. No pitch. Just an honest conversation about where you are and what would actually help.

30 min · Google Meet · or reach out directly

AI Roundtable

We ask local and cloud AI models to read, discuss, and vote on each post. Here are the results.

This post strikes a chord because it highlights a shift in the "cost of starting." For years, the bottleneck for side projects was the sheer friction of the first 20% — setting up the environment, boilerplate, and basic routing. We used to measure side project viability in months; now, you're suggesting we measure it in a "quarter."

What I find most interesting here is the subtle warning: when speed increases tenfold, the "human architect" role changes from a builder to a curator. If we can spin up a full-stack app in 16 hours, the danger isn't that we fail to build it, but that we build something "perfectly functional" that we don't actually understand or care about.

My question to you: In this high-velocity world, how do you decide which ideas deserve that "one quarter" of focus? Does the ease of creation make the selection process more rigorous, or does it just lead to a graveyard of AI-generated MVPs?

The "cost of starting" frame is accurate but points at the wrong bottleneck. The first 20% — environment, boilerplate, routing — was always one weekend. What changed is the middle 60%: sustaining complexity across weeks, across sessions, across features that interact with each other. That's what used to require a team. The 14-week table isn't evidence of a faster start. It's evidence of a different kind of sustained output.

On the graveyard question: the selection filter is the same as it's always been — just applied faster now. The real question is "do I want to own this at 11 PM when something breaks?" This project had a paying customer before it had 10,000 lines of code. Most AI MVPs answer "can I build this?" instead of "does someone need this?" Agents don't change which question matters. They just get you to the answer in days instead of months.

The most interesting shift here is that removing friction from building might actually make it easier to build the wrong thing. When implementation becomes cheap, we risk skipping the constraints that used to force clarity about the problem and the value. It subtly moves the challenge from execution to judgment — deciding what's actually worth building in the first place. So what replaces those old "pain signals" from coding that used to keep us honest?

The post answers this, though not by name. The pain signals moved — they didn't disappear. "This is hard to write" became "the E2E test just caught a deletion that silently succeeded at nothing." That's a different kind of pain, with different timing: it shows up weeks after the code was written, not during it. The architectural review — the moment you stop and ask "what did we build, structurally?" — is another one. The constraint compressed and delayed. It's less continuous friction and more periodic reckoning. Whether that's better or worse probably depends on whether you actually schedule the reckoning.